The Ethical Implications of AI in Society

AI is booming. It is transforming how businesses operate and how individuals engage with technology. AI promises significant advantages in health care, finance, education, and law enforcement. However, its growing use also brings ethical challenges that must be addressed to ensure responsible AI development. Bias, privacy, accountability, and the future of work all demand attention if we are to avoid unintended consequences.

This article examines the critical ethical issues related to AI and the risks involved. It also discusses how businesses, policymakers, and society can develop responsible AI practices.

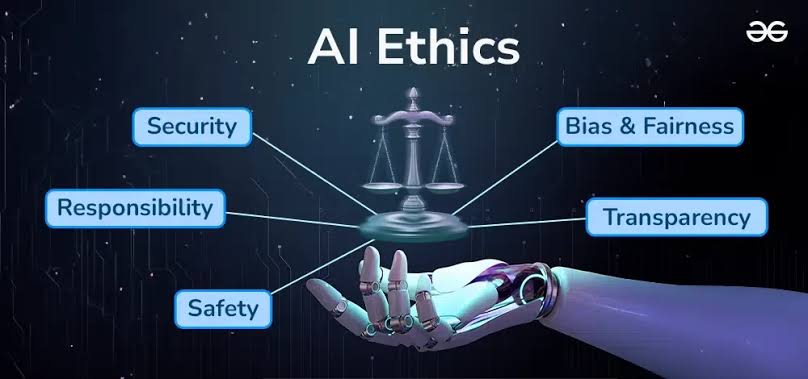

Understanding AI Ethics

What is AI Ethics?

AI ethics is the discipline concerned with creating and applying guiding principles for AI technologies. Reducing harm is central to it. Ethical AI balances fairness, transparency, and accountability in decision-making. It aims to produce AI that respects human rights, is inclusive, and is free of discrimination.

Why AI Ethics Matters

As AI systems carry more weight in human lives, ethical issues take on greater significance. Without oversight of AI systems, we risk more bias, less privacy, and less accountability. Ethical AI ensures that technology works for humanity while maintaining trust and fairness.

AI ethics is key to creating sustainable AI systems for everyone. It helps prevent misuse by individuals or groups, such as using AI for misinformation, surveillance, or manipulation.

Major Ethical Concerns in AI

Bias and Fairness in AI

One of the most pressing issues in AI ethics is bias. AI systems learn from past data. If that data is biased, the AI may reproduce and even amplify those biases.

Causes of AI Bias

- Imbalanced Training Data: AI models trained on biased datasets reflect societal inequalities.

- Algorithmic Design: The choice of algorithms can inadvertently favour certain groups over others.

- Human Oversight: Lack of diverse perspectives in AI development teams leads to unintentional bias.

Examples of AI Bias

- Hiring Algorithms: Some AI-driven hiring tools have been shown to favour male candidates over female ones.

- Facial Recognition: Studies have found racial and gender biases in facial recognition software, leading to misidentifications.

- Loan and Credit Approvals: AI-powered financial systems may disproportionately reject applicants from marginalised communities.

- Healthcare Disparities: AI-driven diagnostic tools may be less accurate for certain demographics due to insufficient diverse data in training sets.
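
Disparities like the ones listed above can be checked with simple measurements. The following is a minimal sketch, assuming the outcomes of a system are available as (group, selected) pairs. The function names, group labels, and data are all hypothetical and invented for illustration. It computes the share of favourable outcomes per group and the ratio between the lowest and highest share, a measure often called the disparate impact ratio.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of favourable outcomes for each group.

    `records` is an iterable of (group, selected) pairs, where `selected`
    is True when the system produced a favourable outcome.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest selection rate to the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favourable outcome, so rates are equal
    return min(rates.values()) / highest


# Hypothetical outcomes, invented purely for illustration.
decisions = (
    [("group_a", True)] * 6 + [("group_a", False)] * 4
    + [("group_b", True)] * 3 + [("group_b", False)] * 7
)

rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
print(rates)
print(f"disparate impact ratio: {ratio:.2f}")
```

A ratio well below 1.0 suggests that one group receives favourable outcomes much less often than another. A single number like this is only a starting point for investigation, not proof that a system is fair or unfair.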

Privacy and Data Security Concerns

AI systems need large amounts of data to work well, which raises significant privacy concerns.

Key Privacy Risks

- Surveillance and Monitoring: AI-powered surveillance tools can track individuals without their consent.

- Data Misuse: Companies collecting personal data for AI training may sell or exploit it without users’ knowledge.

- Hacking and Security Breaches: Cybercriminals see AI databases as prime targets. This puts sensitive information at risk.

- Predictive Analytics Concerns: AI can predict user behaviour. This may lead to invasive ads, unfair pricing, or government spying.

To reduce these risks, laws like the General Data Protection Regulation (GDPR) in the European Union set strict data privacy rules. These rules require companies to handle personal data responsibly, including data used by AI systems.
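
One practical way to lower the privacy risks described above is to pseudonymise direct identifiers before data is used for AI training. The sketch below is one possible approach, not a compliance recipe. It uses Python's standard `hmac` and `hashlib` modules, and the field names, record, and key are hypothetical.

```python
import hashlib
import hmac


def pseudonymise(identifier: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed hash (HMAC-SHA256).

    The same identifier and key always give the same token, so records can
    still be linked, but the token cannot be reversed without the key.
    """
    digest = hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()


def prepare_for_training(record: dict, secret_key: bytes) -> dict:
    """Drop direct identifiers and keep only a pseudonymous token.

    The field names ("email", "name") are hypothetical.
    """
    cleaned = {k: v for k, v in record.items() if k not in ("email", "name")}
    cleaned["user_token"] = pseudonymise(record["email"], secret_key)
    return cleaned


# Hypothetical record and key, for illustration only. A real key would be
# stored separately from the data, for example in a secrets manager.
SECRET_KEY = b"replace-with-a-randomly-generated-key"
record = {"name": "Alice Example", "email": "alice@example.com", "age_band": "30-39"}

cleaned = prepare_for_training(record, SECRET_KEY)
print(cleaned)
```

Pseudonymised data is still treated as personal data under the GDPR, because anyone holding the key can re-link it to a person. The technique reduces exposure but does not replace consent or access controls.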

Transparency and Accountability

Many AI systems are like “black boxes.” This means we can’t easily understand how they make decisions. This lack of transparency leads to accountability issues.

Problems with AI Transparency

- Explainability: AI decisions can be hard to interpret, which makes it difficult to judge whether they are fair.

- Legal and Ethical Accountability: If an AI system causes harm, it’s unclear who is responsible. Is it the developer, the organisation, or the AI itself?

- Public Trust: Without transparency, people may distrust AI applications, which slows adoption.

- AI in Law Enforcement: AI-driven predictive policing raises ethical issues. Biased data can cause wrongful profiling and unfair targeting of communities.
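
One way to make a decision less of a black box is to use a model whose output can be broken down into per-feature contributions. The sketch below assumes a simple linear scoring model. The weights, feature names, and applicant values are hypothetical, and real systems are usually far more complex.

```python
def explain_score(weights, bias, features):
    """Return a score and the contribution of each feature to it.

    In a linear model the score is bias + sum(weight * value), so each
    feature's contribution can be reported directly.
    """
    contributions = {name: weights[name] * value for name, value in features.items()}
    score = bias + sum(contributions.values())
    return score, contributions


# Hypothetical weights and applicant, invented purely for illustration.
weights = {"income_thousands": 0.5, "missed_payments": -2.0, "years_at_address": 0.25}
applicant = {"income_thousands": 40, "missed_payments": 3, "years_at_address": 8}

score, contributions = explain_score(weights, bias=1.0, features=applicant)

print(f"score: {score}")
# List the features with the largest influence first.
for name, value in sorted(contributions.items(), key=lambda item: -abs(item[1])):
    print(f"{name}: {value:+.2f}")
```

Because every contribution is visible, a reviewer can see which factors drove the result and question any that look unfair. This kind of breakdown is exact only for linear models, which is one reason simpler models are sometimes preferred for high-stakes decisions.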

The Future of Work and Automation

As AI automates more tasks, concerns about job displacement and economic inequality arise.

AI’s Impact on Employment

- Job Displacement: Routine and manual jobs are increasingly at risk of automation, particularly in industries such as manufacturing, transportation, and customer service.

- New Job Creation: AI creates jobs in fields such as AI development, data science, and cybersecurity.

- Reskilling and Upskilling: Workers must adapt to an AI-driven job market through continuous learning and retraining programs.

- Economic Inequality: If AI primarily benefits large corporations and tech firms, it could widen the wealth gap and leave certain workers behind.

- Human-AI Collaboration: Ethical AI should enhance human jobs, not replace them entirely, so that AI remains a tool for empowerment rather than a source of disruption.

Developing Responsible AI

Ethical AI Principles

To build responsible AI, companies and governments should adhere to key ethical principles:

- Fairness and Non-Discrimination: AI should be designed to treat all individuals equitably.

- Privacy Protection: Data collection and usage should prioritise user privacy and consent.

- Transparency and Explainability: AI systems should provide clear explanations for their decisions.

- Accountability: Developers and organisations should be responsible for AI outcomes.

- Human-Centric Approach: AI should enhance human well-being rather than replace human judgement.

- Security and Safety: AI systems must be protected against misuse and cyber threats.

Regulations and Policies for AI Ethics

Governments and international organisations are working to regulate AI ethics.

Notable AI Regulations

- EU’s AI Act: Regulates AI applications according to their level of risk, with the strictest requirements for high-risk systems.

- GDPR (General Data Protection Regulation): Governs how personal data is processed, including in AI-powered applications.

- AI Ethics Guidelines (OECD and UNESCO): Provide global frameworks for ethical AI development.

- The White House Blueprint for an AI Bill of Rights: A non-binding framework that sets out principles for protecting people in the U.S. from algorithmic harm.

Corporate Responsibility in AI Development

Businesses deploying AI must implement ethical guidelines, such as:

- Diverse AI Development Teams: Encouraging inclusivity to reduce bias.

- Regular Audits and Testing: Ensuring AI systems remain fair and accountable.

- Public Engagement and Transparency: Informing users how AI affects them and obtaining their informed consent.

- AI Impact Assessments: Evaluating how AI technologies may affect different groups before deployment.
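
A regular audit of the kind listed above could include a check of whether a system is noticeably less accurate for some groups than for others. The following is a minimal sketch of such a check. The function names, the 0.05 tolerance, and the evaluation data are assumptions made for illustration, and a real audit would choose its metrics and thresholds to fit the application.

```python
def accuracy_by_group(examples):
    """Return prediction accuracy for each group.

    `examples` is an iterable of (group, predicted_label, true_label).
    """
    correct, total = {}, {}
    for group, predicted, actual in examples:
        total[group] = total.get(group, 0) + 1
        correct[group] = correct.get(group, 0) + (predicted == actual)
    return {group: correct[group] / total[group] for group in total}


def groups_below_tolerance(per_group, tolerance=0.05):
    """List groups whose accuracy trails the best group by more than `tolerance`."""
    best = max(per_group.values())
    return sorted(group for group, acc in per_group.items() if best - acc > tolerance)


# Hypothetical evaluation results, invented purely for illustration.
evaluation = (
    [("group_a", 1, 1)] * 9 + [("group_a", 1, 0)] * 1
    + [("group_b", 1, 1)] * 7 + [("group_b", 0, 1)] * 3
)

per_group = accuracy_by_group(evaluation)
flagged = groups_below_tolerance(per_group)
print(per_group)
print("needs review:", flagged)
```

Running a check like this before deployment and again at regular intervals gives a team an early signal that a system may be affecting some groups differently.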

The Path Forward: Balancing Innovation with Ethics

AI can significantly improve society. However, we must address ethical concerns so that its benefits are shared fairly. Businesses, governments, and individuals all have a role in promoting responsible AI development that is fair, transparent, and accountable.

Policymakers, researchers, and industry leaders must collaborate to tackle ethical AI concerns. Investing in AI literacy, oversight, and safety will help create an AI ecosystem that is both fair and innovative.

Conclusion: The Importance of Ethical AI for Society

The rise of AI presents both opportunities and challenges. Ethical AI is essential. It prevents harm, defends rights, and ensures AI helps people instead of taking advantage of them. We can build a future where technology empowers everyone. Responsible AI practices will help protect fairness, privacy, and human dignity. As AI evolves, we must prioritise ethical oversight so that its growth matches societal values and supports global well-being.